Today, the biggest news comes from Iran.

A new era of warfare is emerging at a pace that feels almost unreal, with algorithms now shaping the tempo and scale of military action in ways once reserved for science fiction. However, beneath the spectacle of technological power lie unresolved risks that challenge long-standing assumptions about control and responsibility.

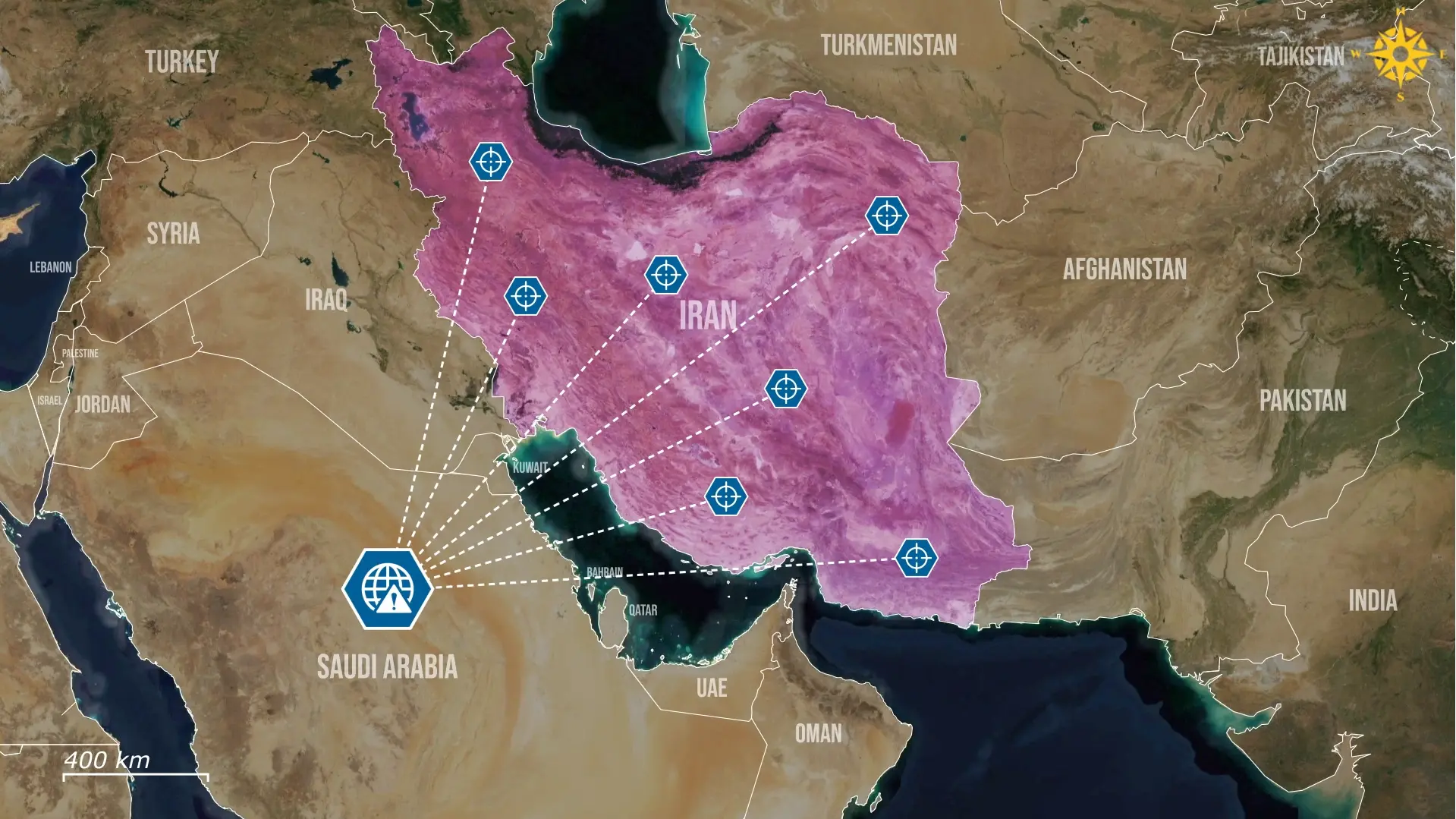

The United States used an integrated AI-driven targeting architecture to identify and strike roughly 1,000 targets in Iran during the first 24 hours of the conflict. Such a scale of action would previously have required weeks of staff work and lengthy analytical cycles.

This astonishing acceleration was enabled by the pairing of the Maven Smart System for surveillance with Anthropic’s Claude AI model. Together, they processed intelligence inputs and produced prioritized target lists at an unprecedented pace.

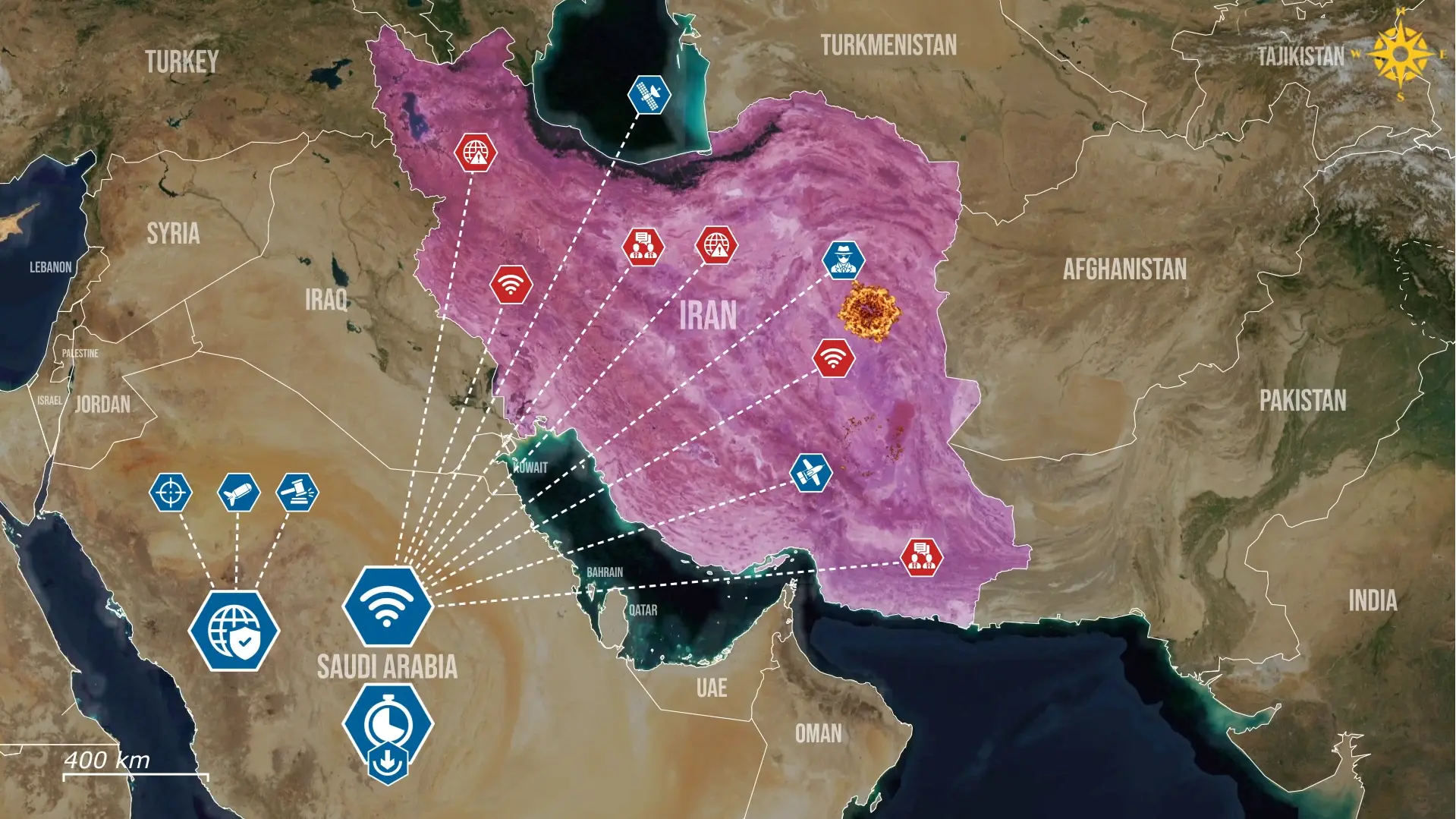

The result was a conveyor belt of strikes that compressed the traditional kill chain and allowed the United States to overwhelm Iranian defenses before they could mount an effective response.

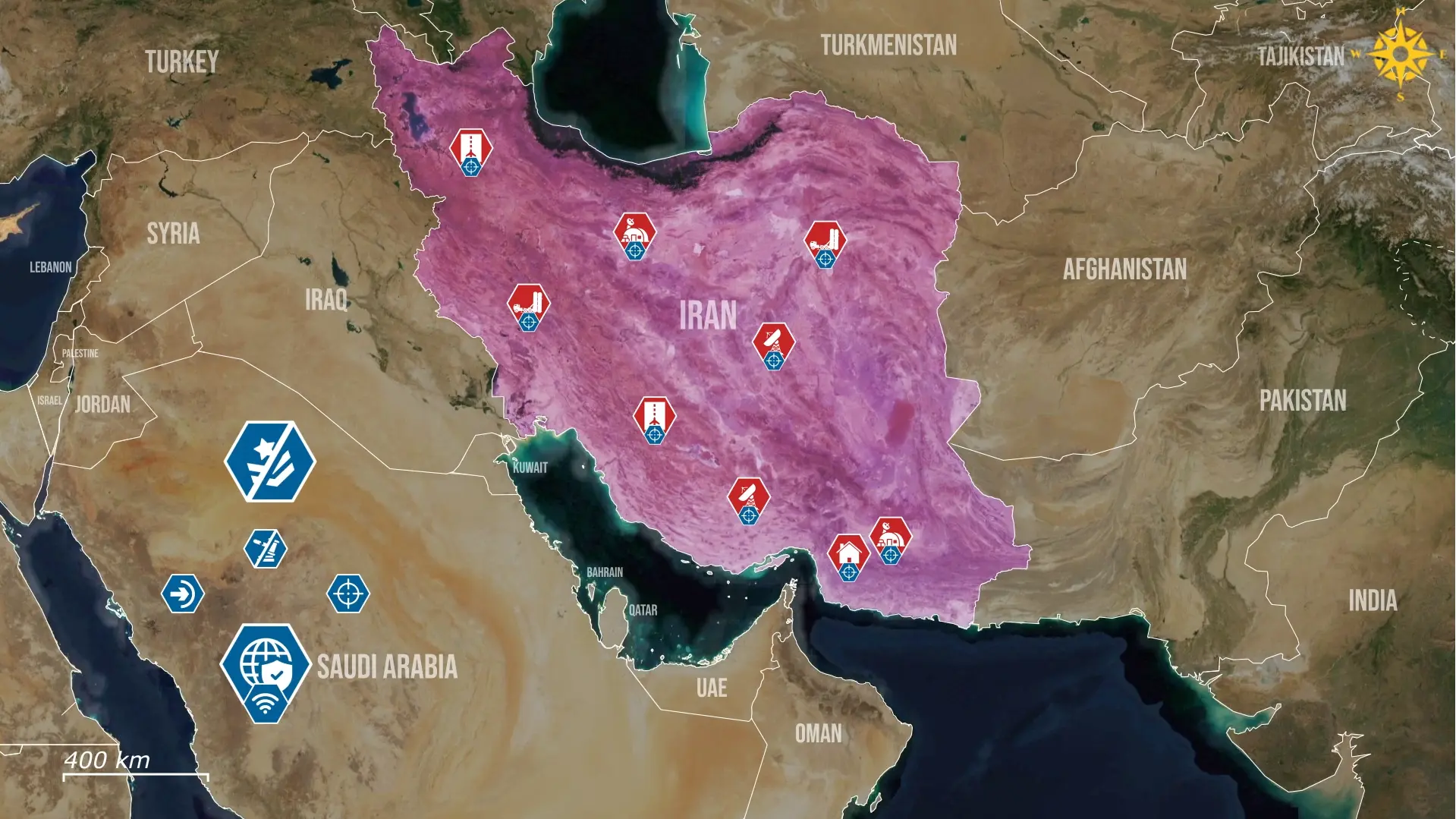

The Maven platform works as an aggregator, collecting data streams from satellites and drones, as well as additional intelligence from phone calls, emails, and other electronic communications. Maven then converts these feeds into structured, coherent information. The Claude model, in turn, synthesizes this material into operationally usable outputs, including coordinates, recommended weapons, and even legal justifications aligned with the laws of war. This workflow reduces the time human analysts need to interpret raw intelligence and turns raw data streams into a constant flow of ready-made targeting packages that can be acted upon almost immediately.

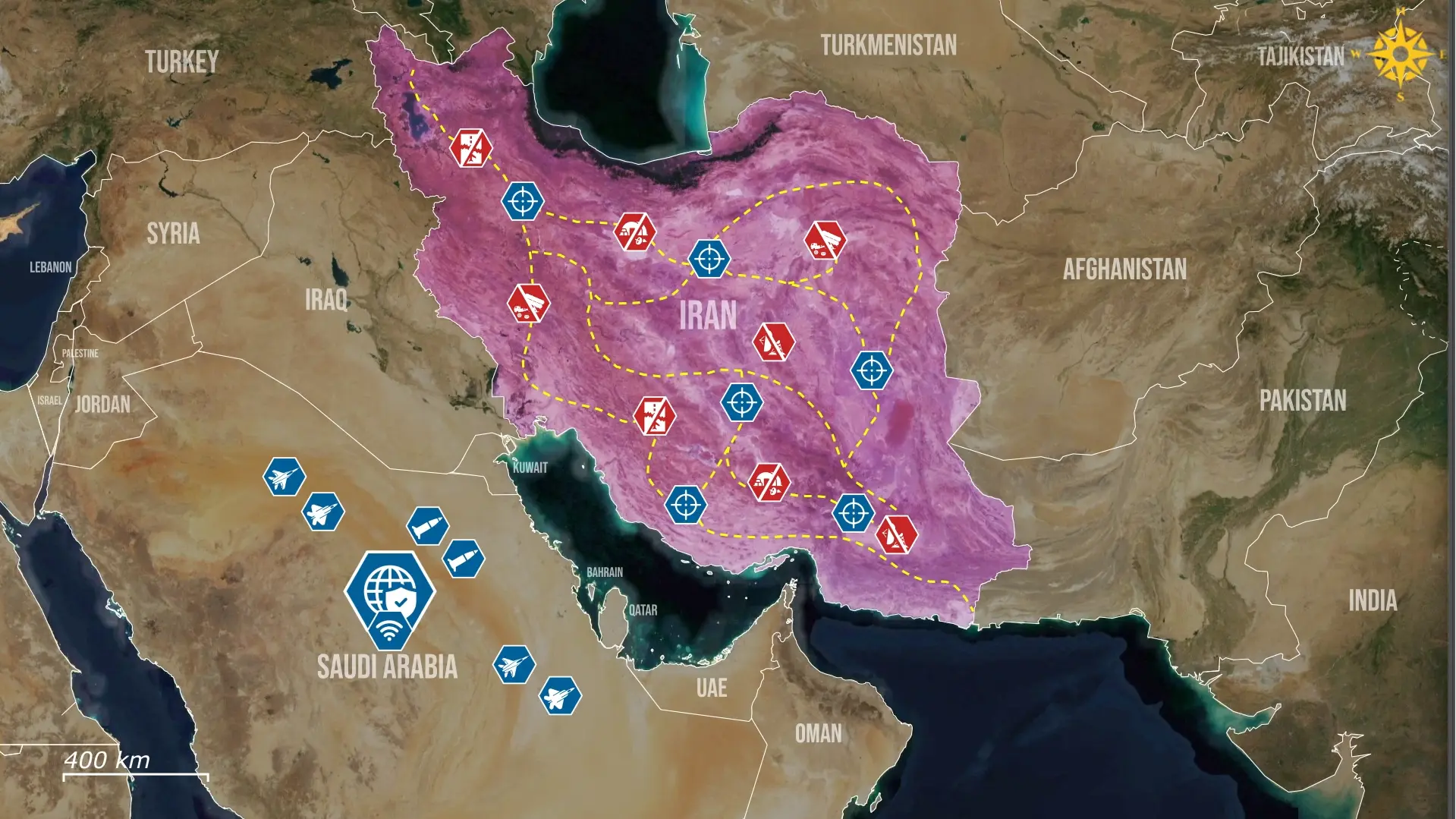

The integration of AI into targeting offers tactical advantages that redefine warfare. The first notable advantage is scale: AI targeting can process data far faster than humans, which enables a strike rate that would be impossible with human analysis alone. The second is depth: the AI-integrated system quickly identifies not only military assets but also logistical ones whose importance might not be readily apparent. These advantages change warfare considerably, because for a military like that of the US, the limiting factor is no longer intelligence processing but the capacity to launch and sustain strikes.

Although the Pentagon formally states that humans retain the final decision power, the rate of machine-generated targeting proposals is pushing the decision-making process towards reliance on the AI algorithm. Officers still officially act as final reviewers who approve or deny strikes, but they can be confronted with hundreds of AI-validated targets at once.

At the same time, they are under pressure to act quickly before the enemy can react. As a result, the officers' role is increasingly limited to confirming many proposed targets rather than making a nuanced assessment of their own.

However, while AI processes information faster than humans, it is not inherently smarter, and it can misinterpret patterns or misclassify civilian structures located near military assets. For instance, reports suggest that an AI-generated misidentification may have contributed to the bombing of a girls’ school next to Iranian military positions in the town of Minab. The possibility that an algorithm could have flagged the site as a warehouse or command node underscores the structural risk created when human oversight becomes little more than a formality. Errors also propagate quickly through automated systems, especially in speed-dependent operations where the pressure to approve targets is high. Maintaining rigorous human review is therefore essential to prevent misclassification from becoming an embedded feature of the targeting cycle.

Overall, the integration of AI into warfare is reshaping the balance between speed, precision, and accountability, creating a system that can deliver large-scale effects with minimal human intervention. This transformation offers clear operational benefits, yet it also introduces new vulnerabilities tied to misclassification, overreliance, and the dilution of human judgment. The central challenge will be designing oversight mechanisms that keep pace with automated decision cycles rather than lag behind them. This becomes increasingly important as militaries expand the use of AI across more domains and legal accountability blurs.
